Unmatched Performance for Local AI

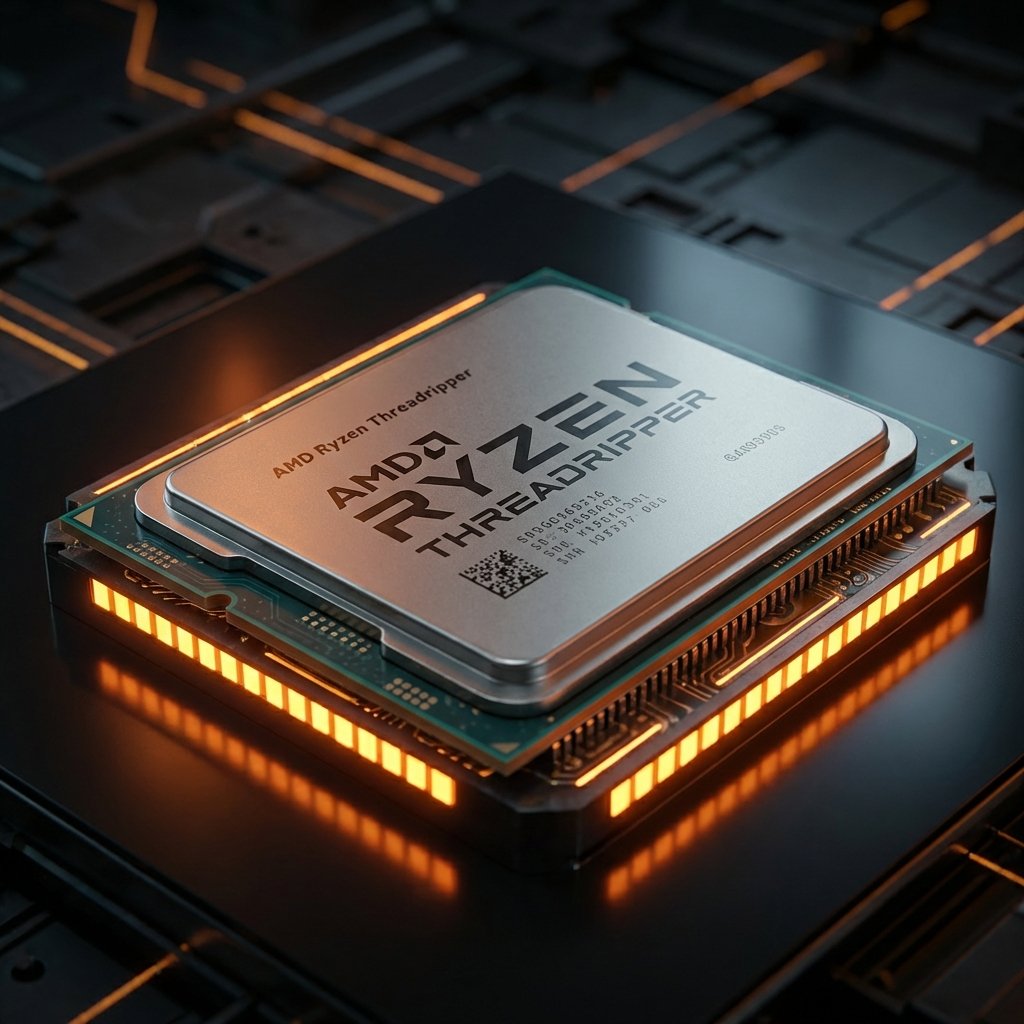

Experience unprecedented AI processing power with the AMD Ryzen Threadripper 7970X. This isn't just a PC. This is the AI computer of the future.

Storm Peak 4.0GHz 32-Core sTR5 Processor. The ultimate engine for multi-modal AI workflows.

24GB GDDR7 PCIe 5.0. Train and run LLMs locally with massive VRAM.

Crucial T500 4TB Gen 4 NVMe + Inland Platinum 2TB SATA SSD. Lightning-fast storage for datasets.

Trinity Performance 360mm AIO Liquid Cooling. Silence meets performance.

Deploy powerful tools in a click. Experiment freely on a secure platform.

Containerization

Development

Runtime

Machine Learning

Deep Learning

IDE

Local LLMs

Integration

Drop-in API

Dive into a suite of powerful AI tools for art, music, video, learning & more. Every app is ready to launch in moments, running securely on your Ryzen Force AI machine to keep your work private.

Image Gen

Audio Gen

Video Gen

LLM Chat

3D Suite

Streaming

Stable Diffusion

Simple SDG

Text Gen WebUI

Easy Chat

Ryzen Force AI makes self-hosting accessible to everyone, whether you run a home lab for remote work or are a veteran IT professional. Manage tasks, automate routines, and organize information with tools you actually own.

Automation

Database

CMS

Team Chat

Knowledge

Storage

A 1000W power supply keeps performance stable under heavy workloads. Train large language models on your own hardware without cloud dependencies. Process video, audio, and text simultaneously with no network round trips.

Optimized for both Windows 11 and our custom RyzenForce Linux OS. Switch between them anytime.

Full compatibility with professional AI tools, CUDA acceleration, and enterprise software.

Our custom open-source distro optimized for AI workloads. Maximum performance, minimal overhead.

See what the community is building with RYZENFORCE. Check out our latest benchmarks and guides.

Read the Blog

"Local LLMs allow users to run models directly on their own devices, eliminating the need for continuous internet connectivity and avoiding privacy concerns that arise from using third-party cloud services. Here are some benefits of using local LLMs…"

Support the project: donate to our Bitcoin address

3JgncMj7Qsh7k2pyvwqfvhao6PgHqkrEw2

From developer AI lab to enterprise inference cluster — each build runs Ollama, LM Studio, PyTorch, and the full ROCm stack out of the box.